Why Is Solana Full of Prop AMMs While the EVM Still Has None?

Original Article Title: Must-Watch dApps After Monad Mainnet Launch

Original Article Author: @0xOptimus

Original Article Translation: Dingdang, Odaily Planet Daily

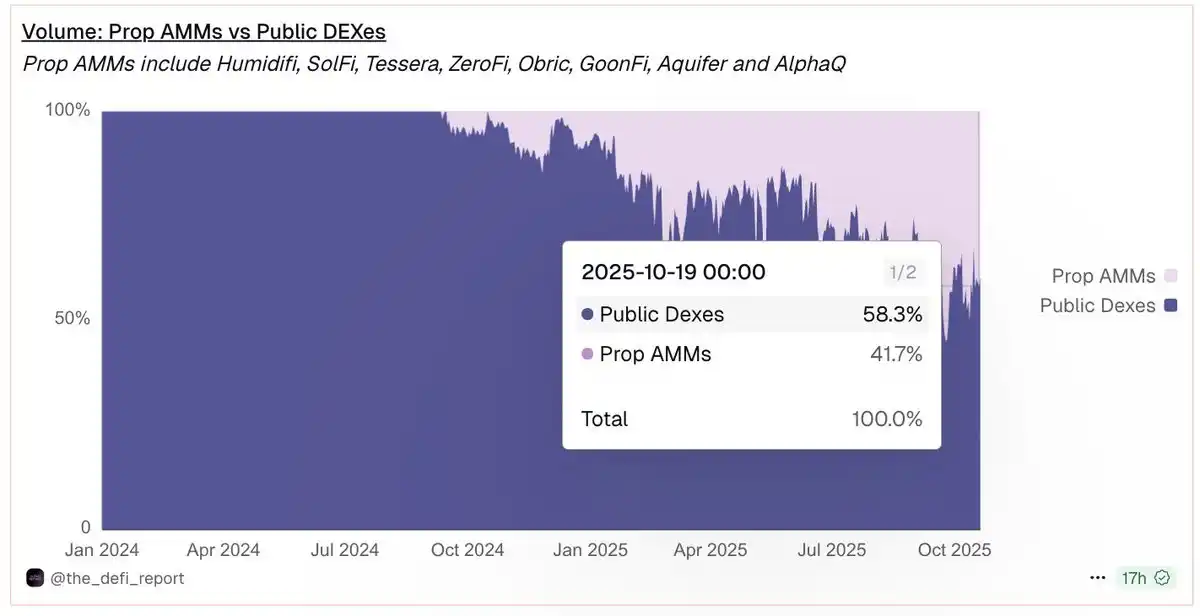

Proprietary AMMs have rapidly captured 40% of Solana's total trading volume. Why haven't they appeared on the EVM yet?

Proprietary Automated Market Makers (Prop AMMs) are quickly becoming the dominant force in the Solana DeFi ecosystem, currently contributing over 40% of the trading volume in major pairs. These liquidity venues, operated by professional market makers, can provide deep liquidity and more competitive pricing. The key reason is that they significantly reduce the risk that arbitrageurs trade against a market maker's stale quotes before the market maker can update them.

Image Source: dune.com

However, their success has been almost entirely limited to Solana. Even on fast and low-cost Layer 2 networks like Base or Optimism, the presence of Prop AMMs in the EVM ecosystem is rare. Why have they not taken root on the EVM?

This article mainly explores three issues: what Prop AMMs are, the technical and economic barriers they face on the EVM chain, and the promising new architectures that may eventually bring them to the forefront of EVM DeFi.

What Are Prop AMMs?

Proprietary AMMs are a type of automated market maker where a single professional market maker actively manages liquidity and pricing, rather than having funds passively provided by the public as in traditional AMMs.

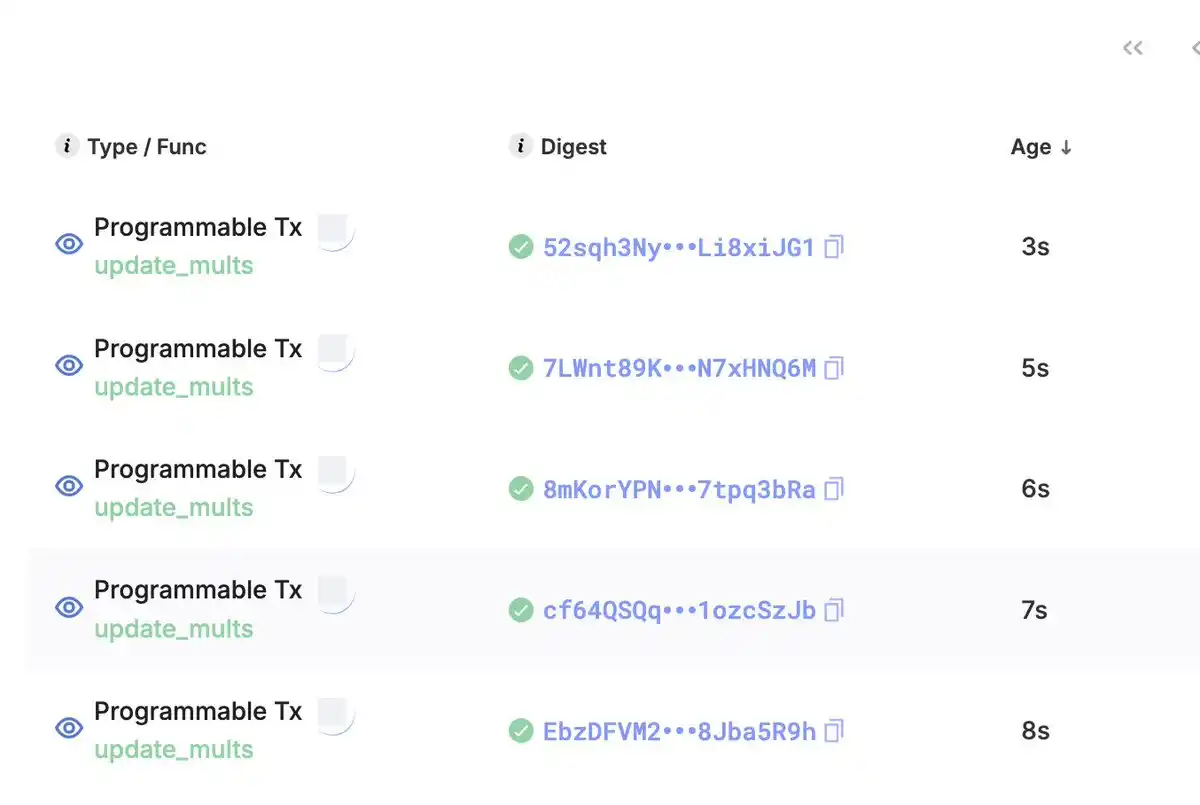

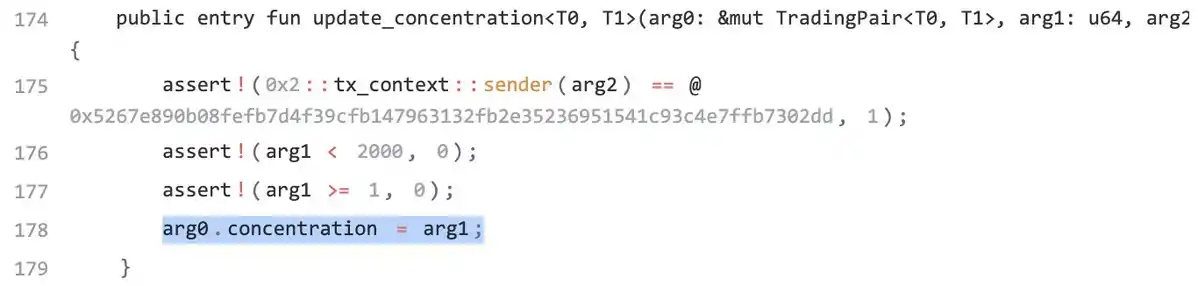

Traditional AMMs (such as Uniswap v2) usually use the formula x * y = k to determine the price, where x and y represent the quantities of the two assets in the pool, and k is a constant. In Prop AMMs, the pricing formula is not fixed but is frequently updated (often multiple times per second). Since the internal mechanics of most Prop AMMs are considered a "black box," the outside world does not know the exact algorithm they use. However, the Prop AMM smart contract code on the Sui chain from Obric is public (thanks to @markoggwp's discovery), where the invariant k depends on the internal variables mult_x, mult_y, and concentration. The image below shows how the market maker continuously updates these variables.

One clarification: the left-hand side of Obric's pricing formula is more complex than a simple x * y. The key to understanding a Prop AMM, however, is that the left-hand side always equals a variable invariant k, and the liquidity provider continuously updates this k to adjust the price curve.

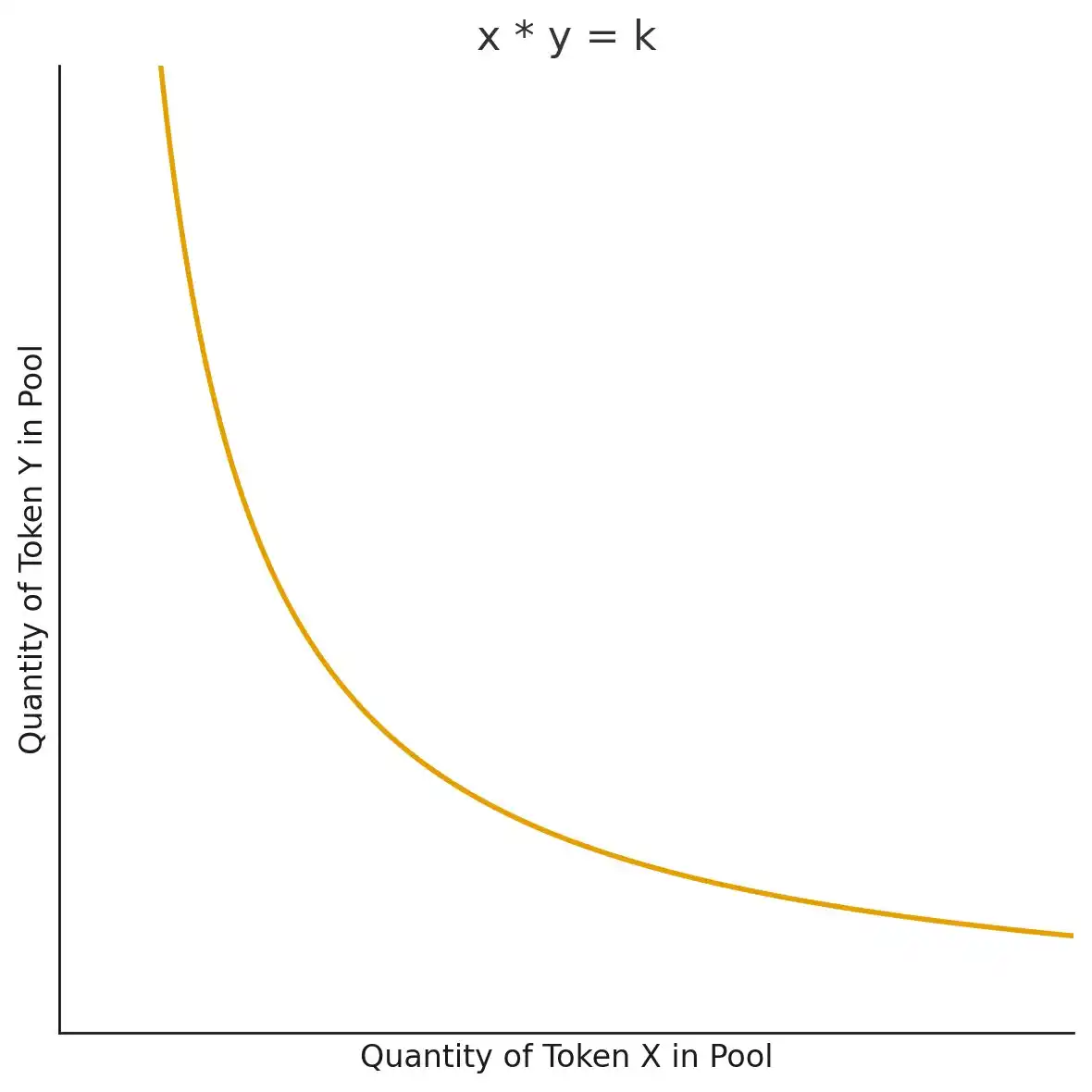

Review: How Does AMM Determine Prices?

In this article, we will mention the concept of a "price curve" multiple times. The price curve determines the price users need to pay when trading using an AMM and is the part that liquidity providers continuously update in the Prop AMM. To better understand this, we can first review the pricing mechanism of a traditional AMM.

Take the WETH-USDC pool on Uniswap v2 as an example (assuming no fees). The price is passively determined by the formula x * y = k. Suppose the pool holds 100 WETH and 400,000 USDC, so the current point on the curve is x = 100, y = 400,000, corresponding to an initial price of 400,000 / 100 = 4,000 USDC/WETH. This gives a constant k = 100 * 400,000 = 40,000,000.

If a trader wishes to buy 1 WETH, they must add USDC to the pool, reducing the WETH in the pool to 99. To maintain the constant product k, the new point (x, y) must still lie on the curve, so y must become 40,000,000 / 99 ≈ 404,040.40. The trader therefore pays 404,040.40 - 400,000 ≈ 4,040.40 USDC for 1 WETH, slightly more than the initial price of 4,000. This phenomenon is known as "price slippage." This is why x * y = k is called a "price curve": any tradable price must fall on this curve.
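The arithmetic above can be reproduced with a short Python sketch. The function name `constant_product_buy` is illustrative only and is not taken from any Uniswap codebase; fees are ignored, as in the example.

```python
def constant_product_buy(x_reserve: float, y_reserve: float, x_out: float) -> float:
    """Amount of Y a trader must pay to withdraw x_out of X from an x*y=k pool (no fees)."""
    if not 0 < x_out < x_reserve:
        raise ValueError("x_out must be positive and smaller than the X reserve")
    k = x_reserve * y_reserve            # invariant before the trade
    new_y = k / (x_reserve - x_out)      # Y reserve needed to stay on the curve
    return new_y - y_reserve             # what the trader adds to the pool


weth, usdc = 100.0, 400_000.0
spot_price = usdc / weth                 # 4,000 USDC/WETH
cost = constant_product_buy(weth, usdc, 1.0)

print(f"spot price:     {spot_price:,.2f} USDC/WETH")
print(f"cost of 1 WETH: {cost:,.2f} USDC")
print(f"slippage:       {cost / spot_price - 1:.2%}")
```

Buying a larger amount moves further along the curve, so the average price per WETH rises with trade size.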

Why Do Liquidity Providers Choose AMM Design Over a Central Limit Order Book (CLOB)?

Why would a liquidity provider want to use an AMM design to provide liquidity? Imagine you are a market maker quoting on an on-chain Central Limit Order Book (CLOB). To update your quotes, you would need to cancel and replace thousands of limit orders. If you have N orders, the update is an O(N) operation, which is slow and expensive on-chain.

But what if you could represent all quotes with a mathematical curve? By simply updating a few key parameters defining this curve, you can transform an O(N) operation into a constant O(1) complexity.
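The difference in update cost can be illustrated with a toy Python comparison. Everything here is a hypothetical sketch: the two-parameter linear curve (`mid_price`, `impact_per_unit`) is invented for illustration and is not the curve used by any real Prop AMM, whose formulas are generally not public.

```python
from dataclasses import dataclass


def requote_order_book(levels: list[tuple[float, float]], shift: float) -> int:
    """Shift every (price, size) level by `shift`; returns the number of writes, which is O(N)."""
    writes = 0
    for i, (price, size) in enumerate(levels):
        levels[i] = (price + shift, size)   # one cancel/replace per resting order
        writes += 1
    return writes


@dataclass
class CurveQuote:
    """Hypothetical quote curve: marginal price = mid_price + impact_per_unit * quantity."""
    mid_price: float
    impact_per_unit: float

    def update(self, mid_price: float, impact_per_unit: float) -> int:
        """Re-quote every size at once; returns the number of writes, which is O(1)."""
        self.mid_price = mid_price
        self.impact_per_unit = impact_per_unit
        return 2

    def average_buy_price(self, size: float) -> float:
        """Average price paid when buying `size` units along the linear curve."""
        return self.mid_price + self.impact_per_unit * size / 2


book = [(4000.0 + i, 1.0) for i in range(1, 1001)]   # 1,000 ask levels
book_writes = requote_order_book(book, shift=-5.0)

curve = CurveQuote(mid_price=4000.0, impact_per_unit=1.0)
curve_writes = curve.update(mid_price=3995.0, impact_per_unit=1.0)

print(book_writes, curve_writes)
```

Both structures end up quoting around the new mid price, but the order book needed one write per level while the curve needed two writes regardless of how many sizes it quotes.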

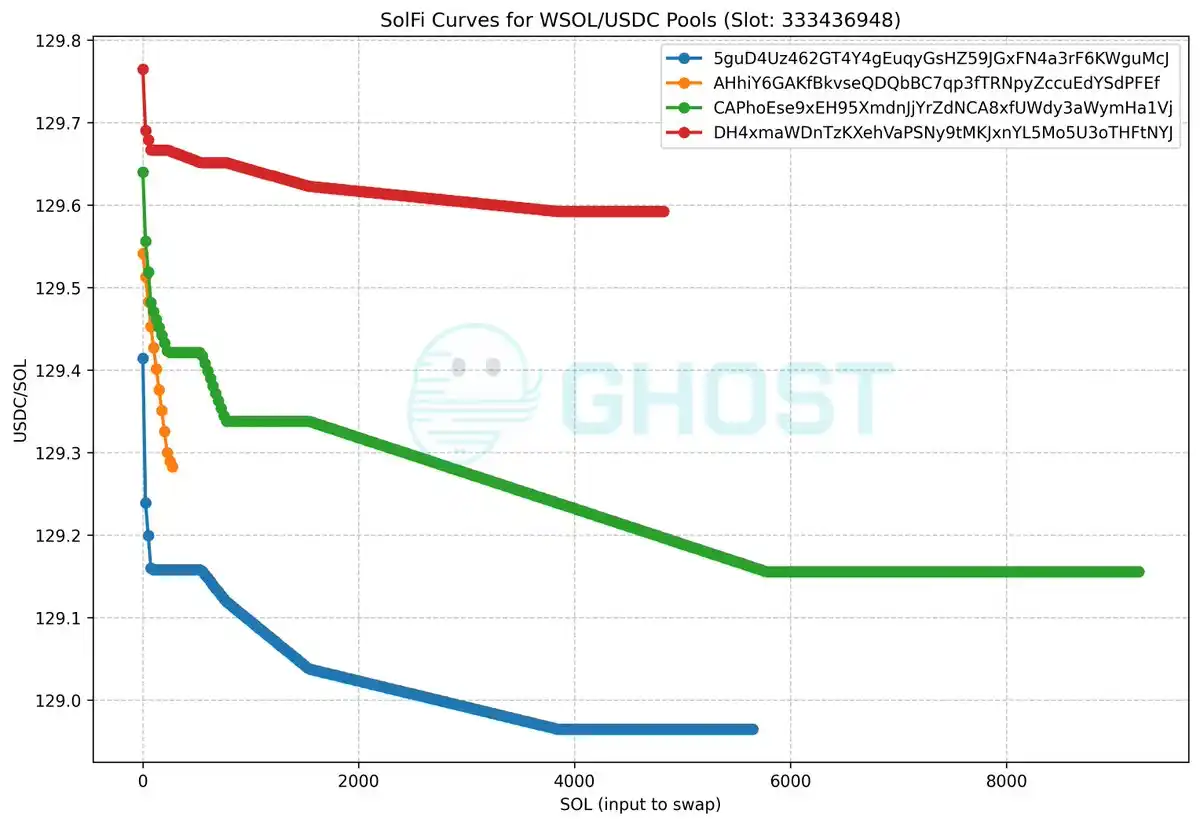

To visually demonstrate how the "Price Curve" corresponds to different effective price ranges, we can refer to SolFi created by Ellipsis Labs—a Solana-based Prop AMM. Although its specific price curve is unknown and hidden, Ghostlabs has created a graph showing the effective price when exchanging varying amounts of SOL for USDC within a certain Solana slot (block time period). Each line represents a different WSOL/USDC pool, illustrating that multiple price tiers can coexist. As the liquidity provider updates the price curve, this effective price graph will also change between different slots.

Image Source: GitHub

The key here is that by updating only a few price curve parameters, liquidity providers can dynamically alter the effective price distribution at any time without having to modify each of the N orders individually. This is precisely the core value proposition of Prop AMM—it enables liquidity providers to offer dynamic and deep liquidity with higher capital and computational efficiency.

Why is Solana's Architecture Ideal for Prop AMM?

Prop AMM is an "actively managed" system, which means it requires two key conditions:

1. Low Update Costs

2. Priority Execution

In Solana, these two aspects are intertwined: Low-cost updates often mean that updates can have priority execution.

But why do liquidity providers need these two conditions? First, they continuously update the price curve in response to inventory changes or movements in a reference price (e.g., centralized exchange prices), as fast as the blockchain allows. On a high-frequency chain like Solana, high update costs would make such high-frequency adjustments difficult to sustain.

Second, if a liquidity provider cannot get its update included at the top of a block, arbitrageurs will trade against its stale quote first, and the liquidity provider takes the loss. Without these two features, liquidity providers cannot operate efficiently, and users receive worse prices.

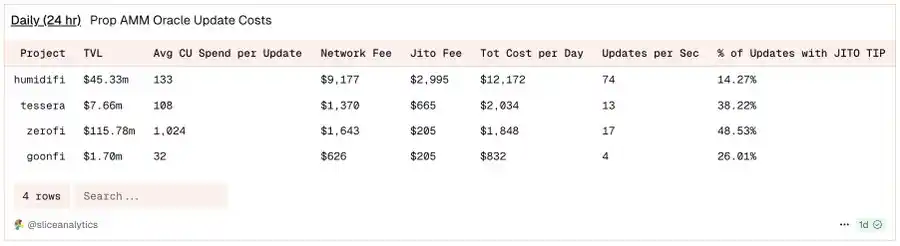

Using the example of the Prop AMM HumidiFi on Solana, according to @SliceAnalytics data, the liquidity provider updates its quote up to 74 times per second.

Readers coming from the EVM may ask: "Solana's slot is approximately 400ms, so how can a Prop AMM update its price multiple times within a single slot?"

The answer lies in Solana's continuous architecture, which is fundamentally different from EVM's discrete block model.

· EVM: Transactions typically execute sequentially once a full block has been proposed and confirmed. This means that an update sent mid-block only takes effect in the next block.

· Solana: Leader validators do not wait for a full block; instead, they break transactions into small data packets (called "shreds") and continuously broadcast them to the network. A slot may contain multiple swaps, but the price update in shred #1 affects swap #1, and the price update in shred #2 affects swap #2.

Note: Flashblocks are similar to Solana's shreds. According to Anza Labs' @Ashwinningg at the CBER conference, the slot's limit of 32,000 shreds every 400ms equates to 80 shreds per millisecond. Whether 200ms Flashblocks are fast enough to meet liquidity provider requirements remains an open question compared to Solana's continuous architecture.

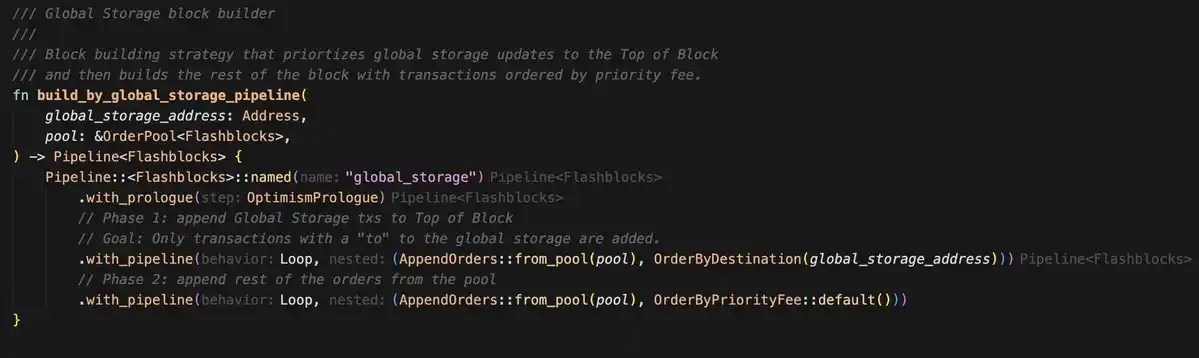

So, why are updates on Solana so cheap? And what leads to their priority execution?

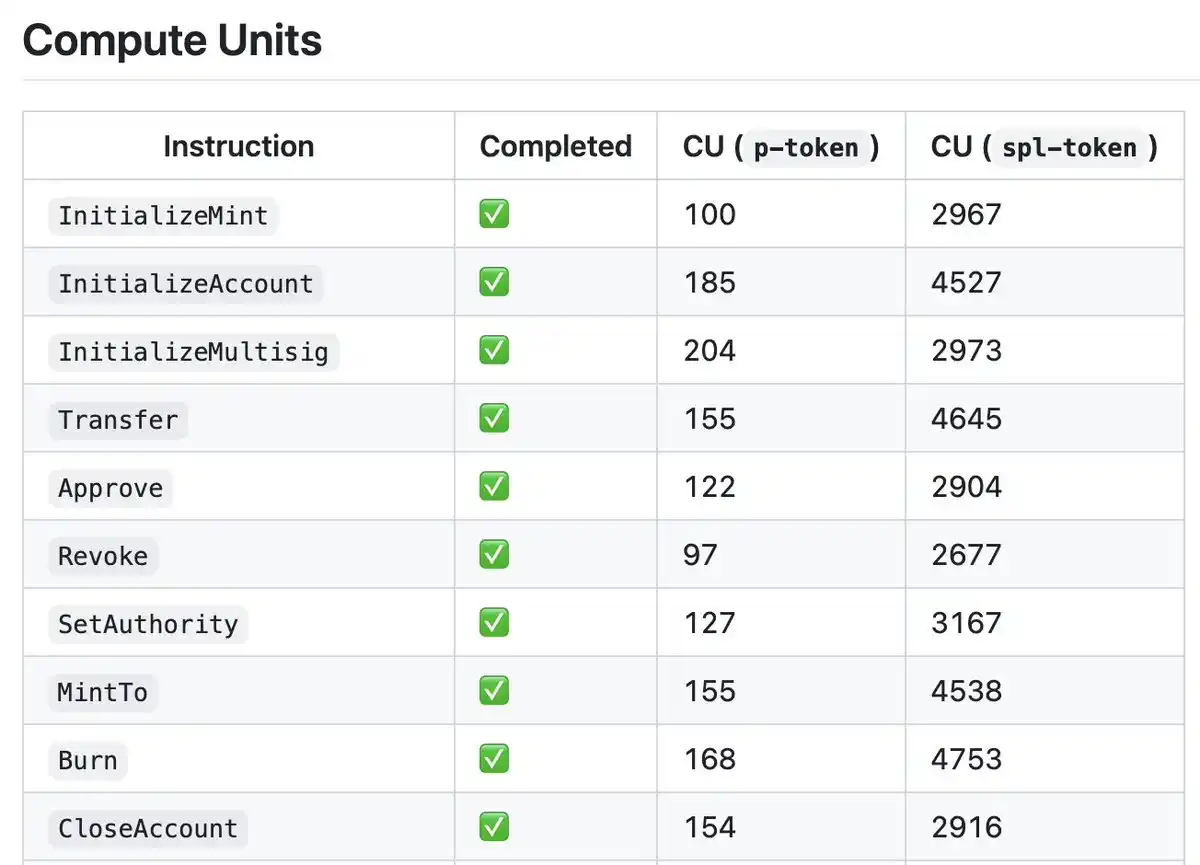

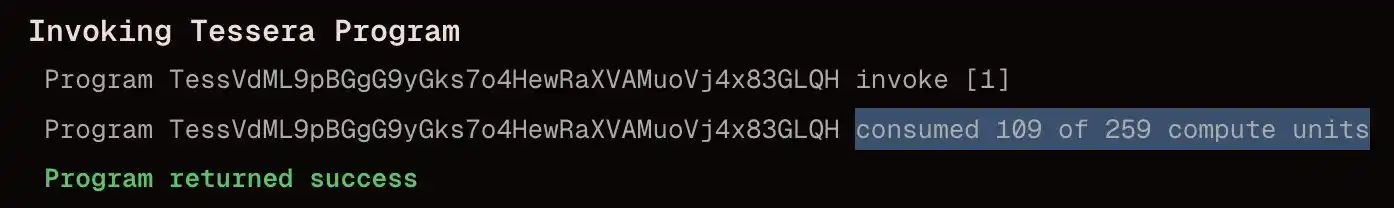

First, although the implementation of Prop AMMs on Solana is a black box, libraries such as Pinocchio reduce the number of compute units (CUs) that Solana programs consume. Helius' blog provides a clear explanation. With this library, the CU consumption of a Solana program can drop from around 4,000 CUs to approximately 100 CUs.

Image Source: GitHub

Now for the second part. At a high level, Solana prioritizes transactions with the highest Fee / Compute Units ratio, much as the EVM orders transactions by fee per unit of gas (Compute Units are Solana's analogue of EVM gas).

· Specifically, if using Jito, the formula is Jito Tip / Compute Units

· Otherwise: Priority = (Tip + Base Fee) / (1 + CU Limit + Signature CU + Write Lock CU)
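The two formulas above translate directly into code. This is a minimal sketch that only restates the formulas as given in the text; the function names and the sample input values are hypothetical and do not come from the Jito or validator codebases.

```python
def jito_priority(jito_tip: float, compute_units: int) -> float:
    """Priority when the transaction goes through Jito: tip per compute unit."""
    return jito_tip / compute_units


def default_priority(tip: float, base_fee: float, cu_limit: int,
                     signature_cu: int, write_lock_cu: int) -> float:
    """Priority = (Tip + Base Fee) / (1 + CU Limit + Signature CU + Write Lock CU)."""
    return (tip + base_fee) / (1 + cu_limit + signature_cu + write_lock_cu)


# Hypothetical inputs (lamports and compute units), chosen only to show the shape of the formula.
small_update = default_priority(tip=1_000, base_fee=5_000, cu_limit=1_399,
                                signature_cu=300, write_lock_cu=300)
large_swap = default_priority(tip=1_000, base_fee=5_000, cu_limit=199_399,
                              signature_cu=300, write_lock_cu=300)

print(small_update, large_swap)
```

With the same fee, the transaction that requests fewer compute units gets the higher priority, which is the property the rest of this section relies on.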

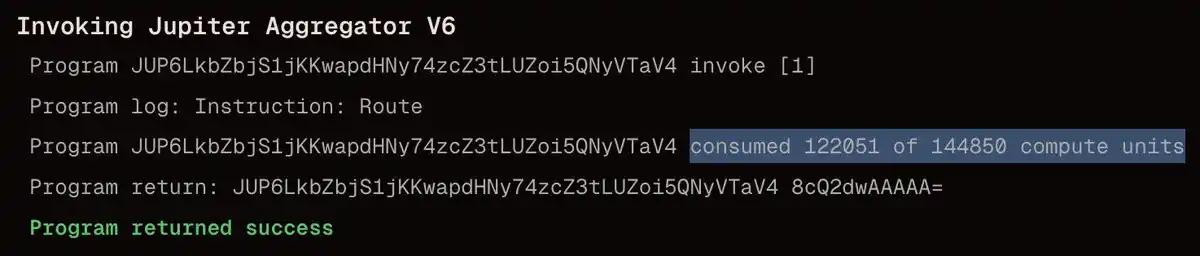

Comparing the Compute Units of a Prop AMM update with those of a Jupiter swap shows that the update is extremely cheap, at a ratio of roughly 1:1000.

Prop AMM Update: The simple curve update is very inexpensive. Wintermute's update is as low as 109 CU, with a total cost of only 0.000007506 SOL

Jupiter Swap: A swap through the Jupiter route can reach ~100,000 CU, with a total cost of 0.000005 SOL

Because of this large difference, liquidity providers only need to pay a minimal fee for update transactions to achieve a much higher Fee/CU ratio than swaps. This ensures that updates execute at the top of the block and protects liquidity providers from arbitrage.
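Plugging the two example transactions above into a fee-per-CU calculation makes the gap concrete. The sketch below uses only the figures quoted in this section (109 CU at 0.000007506 SOL, and roughly 100,000 CU at 0.000005 SOL), converted to lamports at 1 SOL = 1,000,000,000 lamports; the variable names are illustrative.

```python
def fee_per_cu(total_fee_lamports: int, compute_units: int) -> float:
    """Total fee divided by compute units consumed, in lamports per CU."""
    return total_fee_lamports / compute_units


# Figures quoted in the text, converted from SOL to lamports.
UPDATE_FEE_LAMPORTS, UPDATE_CU = 7_506, 109        # 0.000007506 SOL
SWAP_FEE_LAMPORTS, SWAP_CU = 5_000, 100_000        # 0.000005 SOL

update_ratio = fee_per_cu(UPDATE_FEE_LAMPORTS, UPDATE_CU)
swap_ratio = fee_per_cu(SWAP_FEE_LAMPORTS, SWAP_CU)

print(f"update: {update_ratio:.2f} lamports/CU")
print(f"swap:   {swap_ratio:.2f} lamports/CU")
print(f"CU ratio (swap/update): {SWAP_CU / UPDATE_CU:.0f}x")
print(f"fee-per-CU advantage of the update: {update_ratio / swap_ratio:.0f}x")
```

Although the two transactions pay a similar total fee, the update's fee per CU is on the order of a thousand times higher, so it sorts ahead of the swap.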

Why Has Prop AMM Not Yet Landed on EVM?

Assume that a Prop AMM update writes to a variable that determines the price curve of a trading pair. Although the Prop AMM code on Solana is a "black box," because liquidity providers want to keep their strategies confidential, this assumption matches how Obric implemented its Prop AMM on Sui: the variable determining the asset pair's price is written to the smart contract through an update function.

Thanks to @markoggwp for the discovery!

With this assumption, we found a significant barrier in the architecture of the EVM that renders Solana's Prop AMM model unfeasible on the EVM.

Recall that on OP-Stack Layer 2 blockchains (such as Base and Unichain), transactions are prioritized based on per-Gas fees (similar to Solana's Fee/CU sorting).

On the EVM, the Gas cost of write operations is extremely high. Compared to Solana's updates, the cost of writing a value on the EVM via the SSTORE opcode is staggering:

· SSTORE (0 → non-0): ~22,100 gas

· SSTORE (non-0 → non-0): ~5,000 gas

· Typical AMM swap: ~200,000–300,000 gas

Note: Gas on the EVM is similar to Compute Units (CUs) on Solana. The SSTORE gas numbers above assume each transaction performs only one write to a given slot (a cold write), which is reasonable because multiple updates are not typically sent within one transaction.

While updates are still cheaper than swaps, the gas efficiency is only about 10x (updates may involve multiple SSTOREs), whereas on Solana, this ratio is around 1000x.

This leads to two conclusions that make the same Solana Prop AMM model riskier on the EVM:

1. High Gas costs make it difficult to ensure update priority: Because an update consumes a lot of gas, a low total fee cannot produce a high fee/Gas ratio. To keep updates from being front-run and to land them at the top of a block, the liquidity provider must pay more, which increases costs.

2. Higher arbitrage risk on the EVM: The update Gas to swap Gas ratio on the EVM is only 1:10, while on Solana, it is 1:1000. This means arbitrageurs only need to increase fees by 10x to front-run a liquidity provider's update, compared to 1000x on Solana. In this lower ratio scenario, arbitrageurs are more likely to front-run price updates to capture stale quotes due to the low cost.
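The "10x versus 1000x" comparison follows from a simple ratio: to match an update's fee-per-gas, a swap must pay the update's total fee multiplied by (swap gas / update gas). The sketch below uses the gas figures listed above; the assumption that an update rewrites four storage slots is hypothetical, and the 21,000-gas intrinsic transaction cost is ignored to stay consistent with the figures in the text.

```python
SSTORE_COLD_NEW = 22_100        # 0 -> non-0, from the list above
SSTORE_COLD_REWRITE = 5_000     # non-0 -> non-0, from the list above
SWAP_GAS_LOW, SWAP_GAS_HIGH = 200_000, 300_000


def outbid_multiple(swap_gas: int, update_gas: int) -> float:
    """How many times the update's total fee a swap must pay to match its fee per gas."""
    return swap_gas / update_gas


# Assumption for illustration: one update rewrites four existing curve parameters.
evm_update_gas = 4 * SSTORE_COLD_REWRITE
evm_multiple = outbid_multiple(SWAP_GAS_LOW, evm_update_gas)

# Solana figures quoted earlier: ~100,000 CU swap vs. 109 CU update.
solana_multiple = outbid_multiple(100_000, 109)

print(f"EVM:    arbitrageur pays ~{evm_multiple:.0f}x the update fee to get ahead")
print(f"Solana: arbitrageur pays ~{solana_multiple:.0f}x the update fee to get ahead")
```

The smaller the multiple, the cheaper it is for an arbitrageur to outbid the update, which is why the same design carries more risk on the EVM.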

Some innovations (such as EIP-1153's TSTORE for transient storage) offer a write cost of around 100 gas, but this storage is ephemeral: it is only valid within a single transaction and cannot persist a price update for use by later swaps (e.g., across an entire block).

How to Introduce Prop AMM to the EVM?

Before answering, let's address "why do it": Users always want better trade quotes, meaning more bang for their buck. Prop AMMs on Ethereum and Layer 2s could provide users with competitive quotes that were previously available only on Solana or centralized exchanges.

To make Prop AMM feasible on the EVM, let's review one of the reasons for its success on Solana:

· Block-Top Update Protection: On Solana, Prop AMM updates land at the top of the block, shielding liquidity providers from front-running. Top-of-block updates are possible because the compute unit cost is minimal, so even a low fee achieves a high fee/CU ratio, especially compared with swaps.

So, how can we introduce block-top Prop AMM updates to a Layer 2 EVM blockchain? There are two approaches: either reduce the write cost or create a priority channel for Prop AMM updates.

Because of the EVM's state growth problem, reducing the write cost is the less viable approach: cheap SSTOREs would invite state bloat attacks.

We propose creating a priority channel for Prop AMM updates. This is a viable solution and the focus of this article.

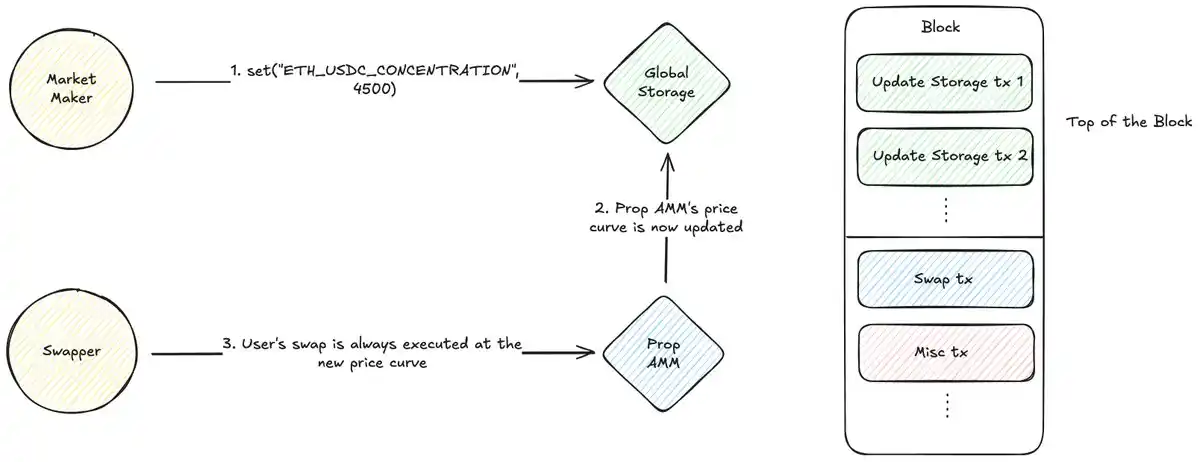

Uniswap's @MarkToda has proposed a new approach, leveraging a Global Storage Smart Contract + Dedicated Block Builder Strategy:

Here's how it works:

· Global Storage Contract: Deploy a simple smart contract as a public key-value store. Liquidity providers write price curve parameters to this contract (e.g., set(ETH-USDC_CONCENTRATION, 4000)).

· Builder Strategy: This is a key off-chain component. The block builder identifies transactions sent to the global storage contract, reserves 5–10% of the block's Gas for these update transactions, and orders them by fee to deter spam.

Please note: Transactions must be sent directly to the global storage address to guarantee placement at the top of the block.

Custom block building algorithm examples can be found in rblib.

· Prop AMM Integration: During swaps, the liquidity provider's Prop AMM contract reads price curve data from the global storage contract to produce quotes.
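The builder strategy described above can be sketched as a small ordering function. This is a simplified model, not the rblib API or any production builder: the `Tx` type, the `build_block` function, the placeholder addresses, and the choice to drop updates that overflow the reserved lane are all assumptions made for illustration.

```python
from dataclasses import dataclass

GLOBAL_STORAGE = "0xGlobalStorage"   # hypothetical placeholder address


@dataclass(frozen=True)
class Tx:
    sender: str
    to: str
    gas: int
    fee_per_gas: int


def build_block(mempool: list[Tx], block_gas_limit: int, update_share: float = 0.10) -> list[Tx]:
    """Place global-storage updates first (within a reserved gas budget), then all other txs by fee."""
    by_fee = sorted(mempool, key=lambda tx: tx.fee_per_gas, reverse=True)
    update_budget = int(block_gas_limit * update_share)
    block, gas_used = [], 0

    # Fast lane: updates compete only with each other, highest fee per gas first.
    for tx in by_fee:
        if tx.to == GLOBAL_STORAGE and gas_used + tx.gas <= update_budget:
            block.append(tx)
            gas_used += tx.gas

    # Remaining block space: ordinary transactions ordered by fee per gas.
    for tx in by_fee:
        if tx.to != GLOBAL_STORAGE and gas_used + tx.gas <= block_gas_limit:
            block.append(tx)
            gas_used += tx.gas
    return block


mempool = [
    Tx("arbitrageur", "0xPropAmm", 250_000, 500),
    Tx("market_maker", GLOBAL_STORAGE, 30_000, 5),
    Tx("user", "0xPropAmm", 250_000, 20),
]
block = build_block(mempool, block_gas_limit=30_000_000)
print([tx.sender for tx in block])
```

In this example the market maker's update is ordered first even though the arbitrageur bids 100 times more per unit of gas, because the update only competes inside the reserved lane.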

This architecture adeptly addresses two issues:

1. Protection: The builder strategy creates a "fast lane" that ensures all price updates in a block execute before any swaps, eliminating front-running risk.

2. Cost Efficiency: Liquidity providers no longer compete with all DeFi users on Gas price to reach the top of the block; instead, they only compete in a local fee market for the top-of-block space reserved for update transactions, which significantly reduces costs.

User transactions will execute based on the price curve set by the liquidity provider at the start of the same block, ensuring the freshness and security of quotes. This model replicates the low-cost, high-priority update environment on Solana in the EVM, paving the way for Prop AMM on the EVM.

However, this model also has some drawbacks, which are left for discussion in the Open Questions at the end of this article.

Conclusion

The feasibility of Prop AMM depends on addressing a core economic issue: cheap and priority execution to prevent frontrunning.

While the standard EVM architecture makes such operations costly and risky, new designs offer different approaches to solve this problem. By combining on-chain global storage smart contracts and off-chain builder strategy in the new design, a dedicated "fast lane" can be created to ensure top-of-block execution of updates, while establishing a local, controlled fee market. This not only makes Prop AMM viable on the EVM but may also revolutionize all EVM DeFi relying on block top oracle updates.

Open Questions

· Is the 200ms Flashblock interval fast enough for Prop AMMs on the EVM to compete with Solana's continuous architecture?

· On Solana, most AMM traffic comes from a single aggregator called Jupiter, which provides an SDK for easy AMM integration. However, on Layer 2 EVM, traffic is dispersed across multiple aggregators with no public SDK. Does this pose a challenge to Prop AMM?

· On Solana, Prop AMM updates consume only about 100 CUs. What is the implementation mechanism behind this efficiency?

· The fast-lane model only guarantees updates at the top of a block. If there are multiple swaps within a Flashblock, how do liquidity providers update prices between these swaps?

· Is it possible to write optimized EVM programs using languages like Yul or Huff, similar to Solana's Pinocchio optimization approach?

· How does Prop AMM compare to RFQ?

· How can we prevent liquidity providers from offering competitive quotes in Block N to entice users and then updating to uncompetitive quotes in Block N+1? How does Jupiter mitigate this risk?

· The Ultra Signaling feature of Jupiter Ultra V3 allows Prop AMM to differentiate between harmful and benign traffic, providing tighter quotes. How crucial are these aggregator features for Prop AMM on EVM?


